What questions to ask for a survey: a practical guide to crafting effective questions

Learn how to determine the right survey questions, choose types that fit your goals, pilot test effectively, and avoid bias. A complete how-to for designing surveys that yield actionable insights.

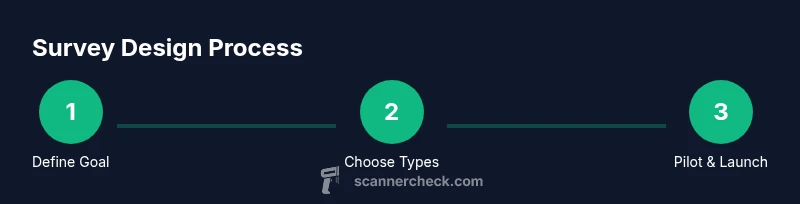

To know what questions to ask for a survey, start with your goals, map the respondent journey, and choose question types that align with your measurements. Draft concise items, pilot test with a small group, and refine before launch. This approach keeps data clean, actionable, and easy to analyze. Focus on relevance, avoid bias, and plan for analysis from the start.

Foundations: What makes a good survey question?

According to Scanner Check, the best survey questions start with a clear goal and end with measurable outcomes. Before writing a single item, define what you want to learn (the decision your survey will inform) and who will answer. Translate each goal into one or more questions, and map each item to a specific data need. Consider the respondent's context: their background, knowledge, and what success looks like for them. When you design with intent, you reduce ambiguity, improve response quality, and speed up analysis. The first rule is relevance: every question should directly contribute to your objective. If it doesn't, drop it.

In this section, we’ll explore how to translate objectives into a question plan, why some questions fail to yield actionable data, and how to avoid common traps such as ambiguity, jargon, and loaded language. The Scanner Check team found that surveys succeed when there’s a tight link from goals to questions to analysis plan. A well-structured start helps you avoid scope creep and ensures you collect data you can actually use in decision making. You’ll also learn how to balance breadth and depth so respondents stay engaged while you gather enough insight to inform your decisions.
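The link from goals to questions can be checked mechanically before any wording work begins. The sketch below is a minimal illustration, assuming a hypothetical plan in which every draft question carries the id of the goal it serves; the goal ids, question texts, and the `audit_plan` helper are invented for this example.

```python
# Hypothetical goals and draft questions; replace with your own plan.
goals = {
    "G1": "Decide whether to extend support hours",
    "G2": "Measure satisfaction with delivery",
}

questions = [
    {"id": "Q1", "text": "How satisfied are you with delivery speed?", "goal": "G2"},
    {"id": "Q2", "text": "When do you usually contact support?", "goal": "G1"},
    {"id": "Q3", "text": "What is your favorite color?", "goal": None},
]


def audit_plan(goals, questions):
    """Return (question ids with no valid goal, goal ids with no question)."""
    orphans = [q["id"] for q in questions if q["goal"] not in goals]
    covered = {q["goal"] for q in questions}
    uncovered = [g for g in goals if g not in covered]
    return orphans, uncovered


orphans, uncovered = audit_plan(goals, questions)
print("Drop or justify:", orphans)
print("Goals lacking items:", uncovered)
```

Questions that land in the first list are candidates for removal under the relevance rule; goals in the second list still need at least one item.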

Question types and when to use them

Different question types serve different purposes. Use dichotomous (yes/no) questions for quick screens or gating. Use single-answer multiple choice for categorical data; ensure the options are exhaustive and mutually exclusive. Use Likert scales to measure attitudes, agreement, or satisfaction; keep the scale balanced around a neutral midpoint (e.g., 1–5) and label each point. Use open-ended questions to capture nuance, ideas, and context that fixed choices miss; provide prompts to guide responses. Semantic differential scales quantify where a respondent falls between two opposing attributes (e.g., easy vs. difficult). Matrix questions save space but risk fatigue; use them sparingly and group related items.
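For numeric answer brackets such as age or income ranges, "exhaustive and mutually exclusive" can be tested directly. The following is a rough sketch, assuming whole-number brackets with inclusive endpoints that all lie inside the range you intend to cover; `check_brackets` and the sample age ranges are made up for illustration.

```python
def check_brackets(brackets, lowest, highest):
    """Check inclusive integer ranges for overlaps and gaps.

    Assumes every bracket lies within lowest..highest. Returns a list of
    problems; an empty list means the options are mutually exclusive and
    cover the whole range.
    """
    problems = []
    covered_to = lowest - 1  # highest value covered so far
    for lo, hi in sorted(brackets):
        if lo <= covered_to:
            problems.append(f"overlap: {lo}-{hi} starts at or below {covered_to}")
        elif lo > covered_to + 1:
            problems.append(f"gap: {covered_to + 1}-{lo - 1} not covered")
        covered_to = max(covered_to, hi)
    if covered_to < highest:
        problems.append(f"gap: {covered_to + 1}-{highest} not covered")
    return problems


# A common drafting slip: ages 25 and 35 each fit two options.
draft = [(18, 25), (25, 35), (35, 50), (51, 99)]
fixed = [(18, 24), (25, 34), (35, 50), (51, 99)]
print(check_brackets(draft, 18, 99))
print(check_brackets(fixed, 18, 99))
```

The same idea applies to categorical options, although there the check is a human review rather than arithmetic.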

Map each question type to your outcome: screening questions filter respondents, core questions measure outcome, and optional questions gather context. For practitioners, a common approach is to draft a core battery of 6–12 items tied to your primary outcomes, then add 2–4 exploratory questions. Always prepare response options with explicit, non-overlapping meanings and anticipate missing data by including a 'not applicable' or 'prefer not to say' option where appropriate. The goal is analysis readiness: predefine how you'll code responses, what counts as a valid answer, and how you’ll aggregate data across segments. In practice, you’ll iterate on wording and order to minimize bias and measurement error.
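Analysis readiness is easier to judge once the coding rules are written down as something executable. The sketch below assumes a 5-point agreement scale and treats "Not applicable" and "Prefer not to say" as missing; the labels, numeric codes, and field names (`plan`, `q1`) are illustrative assumptions, not a standard.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical codebook: labels and codes are assumptions for this example.
LIKERT_CODES = {
    "Strongly disagree": 1,
    "Disagree": 2,
    "Neither agree nor disagree": 3,
    "Agree": 4,
    "Strongly agree": 5,
}
MISSING = {"Not applicable", "Prefer not to say", ""}


def code_response(label):
    """Return the numeric code, None for declared-missing, or raise if invalid."""
    if label in MISSING:
        return None
    if label not in LIKERT_CODES:
        raise ValueError(f"Unexpected answer: {label!r}")
    return LIKERT_CODES[label]


def mean_by_segment(rows, segment_key, item_key):
    """Average coded answers per segment, skipping missing values."""
    buckets = defaultdict(list)
    for row in rows:
        code = code_response(row[item_key])
        if code is not None:
            buckets[row[segment_key]].append(code)
    return {segment: mean(codes) for segment, codes in buckets.items()}


rows = [
    {"plan": "free", "q1": "Agree"},
    {"plan": "free", "q1": "Disagree"},
    {"plan": "paid", "q1": "Strongly agree"},
    {"plan": "paid", "q1": "Prefer not to say"},
]
print(mean_by_segment(rows, "plan", "q1"))
```

Raising on unexpected labels is a deliberate choice here: it surfaces export or wording mismatches early instead of silently dropping answers.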

Wording and clarity: Avoid bias and ambiguity

Clear wording reduces misinterpretation and increases data quality. Use simple, concrete language; avoid jargon, acronyms, or specialized terms the intended audience may not understand. Frame questions in the present tense and avoid leading terms that push respondents toward a particular answer. Do not combine two ideas in one question (double-barreled items). For example, instead of asking "Do you approve of the product and its support?" with a yes/no answer, split it into two questions so you can analyze each one separately. Provide balanced response options and ensure a neutral opening. Do not assume a respondent has experience with your product or service; offer brief context if needed.
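A crude automated pass can flag drafts for human review before the pilot. The sketch below is only a heuristic, assuming that a bare "and"/"or" hints at a double-barreled item and that a short, hand-picked word list hints at leading language; the word list and the 25-word length limit are arbitrary assumptions, and the check will produce false positives.

```python
import re

# Assumed, deliberately short word list; extend it for your own domain.
LEADING_TERMS = {"obviously", "clearly", "amazing", "terrible"}
JOINERS = re.compile(r"\b(and|or)\b", re.IGNORECASE)
MAX_WORDS = 25  # arbitrary threshold for "too long"


def lint_question(text):
    """Return a list of warnings for one draft question."""
    warnings = []
    lowered = text.lower()
    if JOINERS.search(text):
        warnings.append("possible double-barreled item (contains 'and'/'or')")
    for term in sorted(LEADING_TERMS):
        if term in lowered:
            warnings.append(f"possibly leading term: {term!r}")
    if len(text.split()) > MAX_WORDS:
        warnings.append(f"long item (over {MAX_WORDS} words)")
    return warnings


print(lint_question("Do you approve of the product and its support?"))
print(lint_question("How satisfied are you with support?"))
```

Treat the output as a reading list for an editor, not as a verdict: "salt and pepper" style phrases are legitimate, and many leading questions contain none of the listed words.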

When wording is clear, you minimize misinterpretation and boost respondent trust. Pilot tests are essential to catch jargon, ambiguous terms, and cultural nuances. Remember that every word matters: a tiny shift can change how someone answers and how you interpret data. This is where the Scanner Check approach to plain language pays off: consistent terminology helps you compare results across groups and over time.

Structure and order: Build a smooth flow

A well-ordered questionnaire minimizes drop-off and improves data quality. Start with a warm, non-sensitive screener to identify eligible respondents. Group related items into sections with clear headings and consistent formatting. Place high-burden questions later, after you’ve built rapport. Use early questions to establish relevance and reduce the temptation to skip. Consider skip logic to tailor the flow based on prior answers, which keeps the survey shorter for some respondents while collecting deeper data from others. Pre-test the order to catch awkward transitions.
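Skip logic is easiest to reason about when each question names the question that follows it. The sketch below is a minimal illustration with hypothetical question ids and routing rules; real survey platforms configure this through their own interfaces, which are not modeled here.

```python
# Hypothetical questionnaire: each question has a routing rule that maps
# the respondent's answer to the id of the next question (or "end").
QUESTIONS = {
    "used_product": {
        "text": "Have you used the product in the last 30 days?",
        "next": lambda answer: "satisfaction" if answer == "yes" else "reason_not_used",
    },
    "satisfaction": {
        "text": "How satisfied are you with the product? (1-5)",
        "next": lambda answer: "end",
    },
    "reason_not_used": {
        "text": "What is the main reason you have not used it?",
        "next": lambda answer: "end",
    },
}


def route(answers, start="used_product"):
    """Walk the questionnaire and return the ids a respondent would see."""
    path = []
    current = start
    while current != "end":
        path.append(current)
        current = QUESTIONS[current]["next"](answers[current])
    return path


print(route({"used_product": "yes", "satisfaction": "4"}))
print(route({"used_product": "no", "reason_not_used": "too expensive"}))
```

Walking every answer combination through a function like this during pre-testing helps confirm that no branch dead-ends or shows a question to the wrong group.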

Include a brief confidentiality statement and, if the platform allows it, an option to save progress. Limit the number of mandatory questions to reduce fatigue; make a question required only when you truly need the answer, because forcing a response invites careless or made-up data. Finally, ensure accessibility: provide alt text for any images and keep font size legible.

Pilot testing and validation

Pilot testing is essential before launch. Run a small, representative sample through the full instrument to identify ambiguities, missing answer options, and technical glitches. Conduct cognitive interviews or think-aloud sessions to understand how respondents interpret each item. Check response rates, time to complete, and whether any questions consistently cause confusion. Use pilot results to revise wording, options, and order. After revisions, run a second mini-pilot if major changes were made. Document decisions so you can justify changes during analysis.
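Two of the pilot checks above, time to complete and items that respondents tend to skip, reduce to simple arithmetic. The sketch below uses made-up pilot records and assumes a skipped item is stored as `None`; the 50% skip threshold is an arbitrary assumption to adjust for your own pilot size.

```python
from statistics import median

# Made-up pilot records: completion time in seconds and per-item answers,
# with None marking a skipped item.
pilot = [
    {"seconds": 310, "answers": {"q1": 4, "q2": None, "q3": "fine"}},
    {"seconds": 275, "answers": {"q1": 5, "q2": None, "q3": None}},
    {"seconds": 420, "answers": {"q1": 3, "q2": 2, "q3": "slow site"}},
    {"seconds": 298, "answers": {"q1": 4, "q2": None, "q3": "ok"}},
]


def pilot_summary(records, skip_threshold=0.5):
    """Return median completion time, per-item skip rates, and flagged items."""
    times = [r["seconds"] for r in records]
    items = records[0]["answers"].keys()
    skip_rates = {
        item: sum(r["answers"][item] is None for r in records) / len(records)
        for item in items
    }
    flagged = sorted(i for i, rate in skip_rates.items() if rate >= skip_threshold)
    return median(times), skip_rates, flagged


median_seconds, skip_rates, flagged = pilot_summary(pilot)
print(median_seconds, skip_rates, flagged)
```

A flagged item is a prompt to ask pilot participants about it in the cognitive interviews, since a high skip rate alone does not say whether the wording, the options, or the topic is the problem.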

Starter templates: ready-to-use question sets

The following templates can jump-start your survey design. Template A focuses on customer satisfaction: 5-point Likert items for product quality, delivery experience, and support; one or two open-ended prompts for context. Template B targets employee engagement: items on motivation, communication, autonomy, and alignment with company goals; include an open-ended question for feedback. Template C is for market opinion: product awareness, growth interest, and preferred channels; add dichotomous screening questions to segment respondents. Adapt wording to your brand voice, and ensure your response options cover all plausible answers. Remember to pilot test and refine before deploying widely.

These templates are starting points. Tailor language to your audience, define your answer options with precision, and ensure each item maps back to your research goals. The templates reduce drafting time while preserving methodological rigor. After you customize them, run a short pilot to verify comprehension and timing, then finalize with any necessary edits.
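Keeping a template as data makes it easier to adapt wording without losing structure. The sketch below renders Template A under stated assumptions: the satisfaction labels, the question phrasing, the open-ended prompt, and the `build_satisfaction_template` helper are all illustrative choices rather than fixed wording.

```python
# Assumed 5-point satisfaction labels; adjust to your own scale wording.
SCALE = [
    "Very dissatisfied",
    "Dissatisfied",
    "Neutral",
    "Satisfied",
    "Very satisfied",
]


def build_satisfaction_template(brand):
    """Return Template A as a list of question dicts for the given brand."""
    topics = ["product quality", "delivery experience", "support"]
    items = [
        {
            "id": f"sat_{number}",
            "type": "likert5",
            "text": f"How satisfied are you with {brand}'s {topic}?",
            "options": list(SCALE),
        }
        for number, topic in enumerate(topics, start=1)
    ]
    # One open-ended prompt for context, as the template suggests.
    items.append(
        {
            "id": "open_1",
            "type": "open",
            "text": "What is the main reason for the ratings you gave?",
        }
    )
    return items


for item in build_satisfaction_template("Acme"):
    print(item["id"], "-", item["text"])
```

Because every Likert item shares one scale, results stay comparable across topics, and a change to the labels happens in a single place.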

Ethical considerations and accessibility

Respect respondents’ privacy and obtain informed consent. Explain why you’re collecting data, how it will be used, and who will have access. Provide an option to decline and avoid collecting personally identifiable information unless strictly necessary. Ensure materials are accessible: use plain language, provide translations if needed, and support screen readers with proper labels. If your survey includes images or multimedia, provide alt text and ensure captions are available. Finally, monitor response bias and be transparent about limitations in analysis; clarity builds trust and improves participation rates.
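One way to act on the advice about personally identifiable information is to export only an explicit allow-list of fields. The sketch below assumes a hypothetical export schema (`segment`, `q1`, `q2`); it illustrates data minimization only and is not a complete privacy or anonymization solution.

```python
# Assumed export schema: only these fields leave the survey platform.
ALLOWED_FIELDS = {"segment", "q1", "q2"}


def minimize(record, allowed=ALLOWED_FIELDS):
    """Keep only allow-listed fields so stray identifiers are never exported."""
    return {key: value for key, value in record.items() if key in allowed}


raw = {
    "email": "person@example.com",
    "ip_address": "203.0.113.7",
    "segment": "paid",
    "q1": 4,
    "q2": "Delivery was late",
}
print(minimize(raw))
```

An allow-list fails safe: a new field added to the survey later stays out of the export until someone deliberately approves it. Free-text answers can still contain identifying details and need a separate review.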

Tools & Materials

- Online survey platform: choose a tool that supports skip logic, branching, and data export (CSV/Excel).

- Question design checklist: use it to evaluate clarity, bias, length, and option exhaustiveness.

- Pilot test group: a small, representative sample (5–15 people) for initial feedback.

- Consent and privacy templates: include a clear purpose statement and data usage details.

- Accessibility guidelines: plain language, alt text, high-contrast options.

- Data analysis plan: define coding, scoring, and how results will be summarized.

Steps

Estimated time: 60–120 minutes for design, plus 1–2 weeks for data collection and initial analysis

1. Define goals and outcomes

Identify the decision the survey will inform and the key metrics you need. Write one clear objective per outcome and ensure every item maps to a data need. This sets the backbone of your survey and guides the rest of the design.

Tip: Make goals specific, observable, and testable; misalignment here cascades through the rest of the survey.

2. Map respondent paths

Sketch the respondent journey from invitation to response. Determine who should answer which questions and where skip logic should apply. This helps keep the survey concise for some and richer for others.

Tip: Use a simple flow diagram to visualize branches and data capture points.

3. Choose question types

Select one or more formats for each data need (e.g., dichotomous for screens, Likert for attitudes, open-ended for context). Ensure options are exhaustive and mutually exclusive where possible.

Tip: Limit the number of scales to reduce cognitive load and improve comparability.

4. Draft questions with examples

Write items clearly, avoiding jargon. Start with easy topics, then progress to sensitive or complex areas. Include prompts for open-ended responses to guide reflection.

Tip: Keep each item to one idea; avoid double-barreled questions.

5. Review and pilot test

Conduct an internal review, then a small pilot with 5–15 participants. Collect feedback on clarity, timing, and perceived bias. Revise questions, options, and order accordingly.

Tip: Ask pilot participants what they found confusing or irrelevant.

6. Finalize and deploy

Incorporate final edits, run a second quick pilot if major changes were made, and prepare metadata for analysis. Confirm accessibility, privacy disclosures, and consent are included.

Tip: Document all changes with rationale for future auditing.

Common Questions

What is the best order for survey questions?

Start with screening questions, group by topic, and place sensitive items later. Maintain a logical flow to keep respondents engaged and reduce drop-off. Test the order in a small pilot to catch awkward transitions.

How many questions should a survey have?

There’s no universal rule. Aim for 5–15 core questions for a quick completion, plus 2–4 optional items for context. Adjust based on your audience and the complexity of what you’re measuring.

How can I avoid bias in questions?

Use neutral language, avoid leading words, and separate multiple ideas into individual items. Pretest with a diverse group to catch cultural or linguistic biases before launching.

How do I test a survey before launching?

Run a pilot with 5–15 participants, collect feedback on clarity and timing, and revise accordingly. Consider cognitive interviews to understand how items are interpreted.

Can I include open-ended questions?

Yes, but limit their number and provide prompts. Plan how you’ll code and analyze qualitative data for meaningful insights.

Key Takeaways

- Define clear goals before drafting items.

- Match question types to the data you need.

- Pilot test and refine before launch.

- Ensure accessibility and privacy are baked in.