AI Scanners Like Turnitin: A Comprehensive Side-by-Side Comparison

Analytical, practical comparison of AI scanners akin to Turnitin, covering accuracy, data sources, privacy, reporting, and LMS integration to help educators and institutions choose the right plagiarism detector.

AI scanners like Turnitin are changing how institutions detect plagiarism. This comparison, drawing on Scanner Check's analysis, highlights differences in detection accuracy, data sources, privacy, reporting, and LMS integration. Use it to pick the option that best fits your institution's integrity goals, workflows, and budget.

What an AI scanner like Turnitin actually does

An AI scanner like Turnitin combines traditional text-matching techniques with machine-learning analysis to identify exact matches, paraphrase patterns, and potential duplication across a broad range of sources. Beyond a simple similarity score, these tools generate reports that highlight matching passages, link to sources, and include notes on the methods used. For educators and integrity offices, this helps distinguish routine overlaps from content that may warrant closer review. The Scanner Check team notes that real-world use often involves running pilot tests and validating results against course-specific expectations. When evaluating an AI scanner like Turnitin, prioritize how well the tool integrates with existing workflows and whether it supports instructor-led review steps in addition to automated scoring.
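To make the text-matching half of this concrete, here is a minimal sketch of one classic technique: comparing word n-grams ("shingles") with Jaccard similarity. This is an illustration only, not Turnitin's or any vendor's actual method. The function names and sample sentences are assumptions made for the example.

```python
import re


def shingles(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Return the set of word n-grams (shingles) in text, lowercased."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard_similarity(a: str, b: str, n: int = 3) -> float:
    """Share of distinct n-grams the two texts have in common (0.0 to 1.0)."""
    sa, sb = shingles(a, n), shingles(b, n)
    if not sa or not sb:
        return 0.0  # too short to compare
    return len(sa & sb) / len(sa | sb)


# Made-up sample texts for illustration.
submission = "The quick brown fox jumps over the lazy dog near the river."
source = "A quick brown fox jumps over the lazy dog by the river bank."
score = jaccard_similarity(submission, source)
print(f"similarity: {score:.2f}")
```

A score like this only captures near-verbatim overlap. Heavily paraphrased text shares few n-grams with its source, which is the gap the machine-learning layer is meant to cover.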

Core criteria and their impact on decision-making

When comparing AI plagiarism scanners, you should measure:

- Accuracy and sensitivity: how well the system detects genuine matches without over-flagging benign writing.

- Coverage: breadth of sources, languages, and data types.

- Reporting: clarity, export options, and the ability to annotate results for LMS records.

- Privacy and compliance: data handling, retention, and user controls.

- Integrations: LMS plugins, APIs, and SSO compatibility.

- Cost and licensing: total cost of ownership for your class sizes and programs.

- Support and governance: vendor responsiveness, documentation, and training resources.

These criteria create a framework for comparing Turnitin-like tools against alternatives, helping decision-makers identify which option aligns with policy and pedagogy.
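One way to turn the criteria above into a comparable number is a weighted scoring rubric. The sketch below is a hypothetical example: the weights, the vendor names, and the 1 to 5 scores are all placeholders, and each institution would set its own.

```python
# Hypothetical weights for the seven criteria; they should sum to 1.0.
WEIGHTS = {
    "accuracy": 0.25,
    "coverage": 0.15,
    "reporting": 0.15,
    "privacy": 0.20,
    "integrations": 0.10,
    "cost": 0.10,
    "support": 0.05,
}


def weighted_score(scores: dict[str, float]) -> float:
    """Combine 1-5 criterion scores into a single weighted total."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


# Placeholder scores (1 = poor, 5 = excellent) from an imagined review panel.
vendors = {
    "Vendor A": {"accuracy": 4, "coverage": 5, "reporting": 4, "privacy": 3,
                 "integrations": 5, "cost": 2, "support": 4},
    "Vendor B": {"accuracy": 4, "coverage": 4, "reporting": 3, "privacy": 5,
                 "integrations": 4, "cost": 4, "support": 3},
}

ranking = sorted(vendors, key=lambda v: weighted_score(vendors[v]), reverse=True)
for name in ranking:
    print(f"{name}: {weighted_score(vendors[name]):.2f}")
```

The weights matter more than the arithmetic. Agreeing on them before scoring vendors keeps the rubric from being tuned to a preferred outcome.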

How detection accuracy translates to classroom use

Detection accuracy is not a binary good/bad metric. High sensitivity reduces missed cases but may increase false positives, which can erode trust if not managed with human review. Effective Turnitin-like AI scanners balance precision and recall, offering contextual notes and reviewer tools. Institutions should test with representative submissions, including drafts and revised work, to gauge how the system handles paraphrasing, quotation usage, and collaboration patterns. Scanner Check’s analysis emphasizes the importance of pairing automated results with instructor judgment, especially for creative assignments or interdisciplinary work where standard source matching may have limitations.
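The trade-off between precision and recall can be measured directly in a pilot once instructors have reviewed each flagged and unflagged submission. The sketch below uses the standard definitions. The class name and the counts are invented for illustration and do not come from any real evaluation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotCounts:
    """Outcome counts after instructors reviewed every pilot submission."""
    true_positives: int   # flagged, and reviewers confirmed a problem
    false_positives: int  # flagged, but reviewers found the work acceptable
    false_negatives: int  # not flagged, but reviewers found a problem
    true_negatives: int   # not flagged, and the work was acceptable


def precision(c: PilotCounts) -> float:
    """Of everything the tool flagged, the share that was a real problem."""
    flagged = c.true_positives + c.false_positives
    return c.true_positives / flagged if flagged else 0.0


def recall(c: PilotCounts) -> float:
    """Of all real problems, the share the tool flagged (sensitivity)."""
    actual = c.true_positives + c.false_negatives
    return c.true_positives / actual if actual else 0.0


def false_positive_rate(c: PilotCounts) -> float:
    """Of all acceptable submissions, the share wrongly flagged."""
    acceptable = c.false_positives + c.true_negatives
    return c.false_positives / acceptable if acceptable else 0.0


# Invented counts for a 200-submission pilot.
pilot = PilotCounts(true_positives=18, false_positives=6,
                    false_negatives=2, true_negatives=174)
print(f"precision: {precision(pilot):.2f}")
print(f"recall: {recall(pilot):.2f}")
print(f"false positive rate: {false_positive_rate(pilot):.3f}")
```

In this made-up pilot the tool catches most real cases, yet one in four flags is a false alarm. That is why every flag should still go through human review.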

Data sources, coverage, and their effect on results

Source coverage drives what the system can detect. A broad data footprint—including academic journals, publisher content, student submissions from partner institutions, and vetted open-web sources—improves detection but raises privacy and policy questions. AI scanners that rely on expansive datasets often provide more robust paraphrase detection and cross-language matching, yet institutions must weigh how this data is stored, who can access it, and how retention aligns with FERPA or other regulations. Scanner Check’s guidance suggests mapping data sources to educational goals and ensuring transparency about what constitutes a match and why it was flagged.

Privacy, policy, and compliance considerations

Privacy controls are central to choosing an AI scanner like Turnitin. Critical questions include data ownership, retention periods, encryption standards, and whether student submissions are used to train models. Institutions should seek tools with clear data handling policies, adjustable retention settings, and an option to opt out of training datasets when possible. Compliance considerations extend to teacher approvals, administrator oversight, and adherence to local laws. A thoughtful policy reduces risk while preserving the integrity benefits of automated analysis. Scanner Check recommends documenting expectations in a formal data-use agreement and conducting periodic reviews of privacy controls with stakeholders.
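A retention period is easier to audit when it is written as an explicit rule. The sketch below shows one hypothetical way to check whether a stored submission has outlived an agreed retention window. The function name, the 365-day window, and the dates are assumptions for the example, not a vendor feature.

```python
from datetime import date, timedelta


def is_past_retention(submitted_on: date, retention_days: int, today: date) -> bool:
    """True if the submission is older than the agreed retention window."""
    return today > submitted_on + timedelta(days=retention_days)


# Hypothetical policy: keep submissions for 365 days after submission.
RETENTION_DAYS = 365
submitted = date(2024, 1, 15)

print(is_past_retention(submitted, RETENTION_DAYS, today=date(2024, 6, 1)))
print(is_past_retention(submitted, RETENTION_DAYS, today=date(2025, 2, 1)))
```

A periodic review could run a check like this against an export of stored submissions to confirm that the vendor's deletion behavior matches the data-use agreement.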

Reporting formats and instructor workflows

Modern AI scanners provide multiple reporting modes to support instructors: inline dashboards, downloadable reports, and feedback-ready annotations. Some tools offer per-submission summaries, group-level analytics, and rubric integrations to align with course outcomes. Export formats (CSV, PDF, or LMS-compatible feeds) enable archival records for accreditation. It’s important to verify that the reporting supports transparent review processes and respects student rights, including the ability to view and dispute results. A clear workflow reduces friction in grading and fosters constructive conversations about integrity.
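As an illustration of an archival export, the sketch below writes review records to CSV with Python's standard `csv` module. The column names and sample rows are hypothetical. A real tool's export schema will differ, so treat this as a shape to adapt, not a vendor format.

```python
import csv
import io

# Hypothetical columns for an archival record of reviewed submissions.
FIELDS = ["submission_id", "course", "similarity_pct", "reviewer_decision"]


def export_reports_csv(rows: list[dict]) -> str:
    """Serialize review records to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Made-up records for illustration.
records = [
    {"submission_id": "S-001", "course": "ENG101",
     "similarity_pct": 42, "reviewer_decision": "needs review"},
    {"submission_id": "S-002", "course": "ENG101",
     "similarity_pct": 7, "reviewer_decision": "no action"},
]
print(export_reports_csv(records))
```

Recording the reviewer's decision alongside the automated score keeps the archive aligned with a human-review policy and gives students something concrete to dispute.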

Integration with LMS and classroom workflows

LMS integration is a practical determinant of success. Tools with LTI compatibility, single sign-on, and deep gradebook integration fit naturally into course workflows. Open APIs and robust developer documentation enable custom connectors for Canvas, Moodle, Brightspace, or other platforms. Consider whether the tool requires manual submission, supports bulk submissions, or offers automated checks for draft submissions. The goal is to minimize disruption to teaching and learning, while maintaining reliable detection and reporting capabilities that teachers can trust.

Pricing models and licensing considerations

Pricing for AI scanners like Turnitin ranges from institution-wide licenses to tiered per-seat arrangements. Vendors may offer discounts for multi-course deployments or academic-year subscriptions. When budgeting, account for the total cost of ownership, including training, support, and any required IT administration. Avoid relying on sticker price alone; assess value in terms of detection quality, reporting features, and integration ease. Scanner Check observes that many institutions find savings through pilots that validate needs before scaling to department-wide implementations.
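The gap between sticker price and total cost of ownership is simple arithmetic once the hidden costs are listed. The sketch below assumes a per-seat license and uses invented figures. Every number and parameter name is a placeholder to be replaced with real quotes and internal estimates.

```python
def total_cost_of_ownership(
    license_per_seat: float,
    seats: int,
    annual_support: float,
    it_admin_hours_per_year: float,
    it_hourly_rate: float,
    one_time_training: float,
    years: int = 1,
) -> float:
    """Recurring yearly costs over the contract plus one-time training."""
    annual = (
        license_per_seat * seats
        + annual_support
        + it_admin_hours_per_year * it_hourly_rate
    )
    return annual * years + one_time_training


# Invented figures for a three-year, 5,000-seat deployment.
seats, years = 5000, 3
tco = total_cost_of_ownership(
    license_per_seat=3.00,
    seats=seats,
    annual_support=2000,
    it_admin_hours_per_year=40,
    it_hourly_rate=60,
    one_time_training=5000,
    years=years,
)
effective_per_seat = tco / (seats * years)
print(f"total cost of ownership: {tco:,.0f}")
print(f"effective cost per seat per year: {effective_per_seat:.2f}")
```

With these placeholder numbers the effective cost per seat lands well above the quoted license price. Comparing vendors on that figure is fairer than comparing sticker prices.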

Best practices for students and instructors

To maximize value, pair automated scanning with clear learning goals and student education about originality. Encourage students to use drafts and revisions, understand citation rules, and engage with feedback from the system as a learning aid rather than a policing tool. For instructors, combine automated reports with a rubric-based review process and ensure students have a transparent avenue to respond to findings. Regular training for both faculty and students helps sustain trust in the technology and reduces unnecessary disputes.

Real-world pitfalls and how to avoid them

Common pitfalls include over-reliance on automated scores, misinterpretation of paraphrase, language bias, and privacy concerns. To avoid these issues, implement a policy that requires human review for flagged cases, provide context in feedback, and limit data-sharing beyond necessary parties. Maintain updated guidelines about acceptable paraphrase levels and ensure students understand expectations. Regular audits of how results are used in grading can prevent unintended consequences and reinforce educational values.

Getting started: a decision checklist

Before selecting an AI scanner like Turnitin, define your goals (detection rigor, student privacy, LMS fit), assemble stakeholders (faculty, IT, legal), and create a selection rubric. Run a pilot with representative courses, gather feedback on accuracy and workflow friction, and compare at least two or three vendors. Ask vendors about data retention, training, API access, and support SLAs. Finally, document a rollout plan with timelines, training sessions, and evaluation milestones. Scanner Check’s framework encourages a realistic, outcome-driven approach.

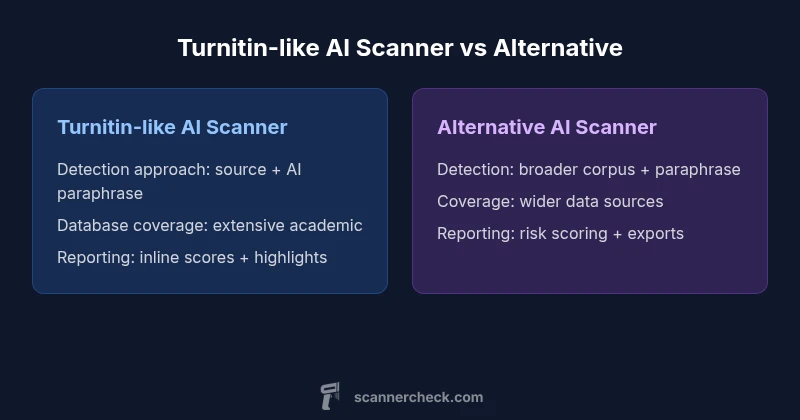

Comparison

| Feature | Turnitin-like AI Scanner | Alternative AI Scanner |
|---|---|---|
| Detection approach | Source-based similarity with AI paraphrase checks | AI-driven detection with broader corpus and paraphrase analytics |
| Database coverage | Extensive academic repository and publisher content | Broader open-web and partner-source datasets |
| Reporting and analytics | Inline similarity score with highlighted matches | Comprehensive risk scoring with exportable reports |
| Privacy controls | Institution-aligned retention policies with encryption | Flexible privacy settings with data minimization options |
| Integration and workflow | LMS plugins, LTI, and API access | Open integrations with major LMS and SSO |
| Pricing model | Institution-wide license or per-seat licensing | Tiered or usage-based pricing with enterprise options |
| Best for | Institutions prioritizing standardized integrity checks | Departments needing broader detection and flexible privacy |

Pros

- Familiar workflow for instructors and students

- Strong baseline integrity expectations in education

- Rich, auditable reporting and feedback tools

- Solid LMS integration and vendor support

Drawbacks

- Risk of over-reliance on automated results

- Privacy and data-management considerations for submissions

- Costs can be challenging for very large cohorts

Turnitin-like AI scanners remain a strong default for academic integrity, but privacy controls and data coverage should drive vendor choice.

For many institutions, a Turnitin-like solution provides reliable detection and familiar workflows. If privacy or broader data sources are essential, evaluate alternatives with transparent data policies and flexible controls.

Common Questions

What is an AI scanner like Turnitin?

An AI scanner like Turnitin combines traditional text-matching with machine-learning analysis to detect similarity and paraphrase. It reviews submissions against extensive databases and the open web, generating reports that highlight sources and provide context for instructors. These tools are designed to support academic integrity while fitting into standard grading workflows.

An AI scanner uses text-matching plus AI checks to flag potential plagiarism and paraphrase; it provides source highlights to help instructors review submissions.

How do AI scanners differ from traditional plagiarism detectors?

Traditional detectors rely primarily on exact text matches, while AI scanners add paraphrase detection and broader data coverage. This can improve detection of rewritten content but may require more nuanced review to avoid false positives. Institutions should balance sensitivity with clear instructor review processes.

AI scanners go beyond word-for-word matching by analyzing paraphrase and context, but they still require human oversight.

What data sources do AI scanners use?

AI scanners pull from publisher databases, academic repositories, and open-web sources, with some vendors offering partner datasets. The exact mix varies by vendor and licensing. Understanding source coverage helps gauge detection breadth and potential blind spots.

They use a mix of academic sources and the open web; coverage varies by tool.

Are these tools compliant with student privacy laws?

Privacy compliance depends on data handling policies, retention settings, and how data is used for model training. Institutions should seek tools with transparent privacy terms, data minimization, and options to restrict or anonymize submissions where possible.

Privacy terms vary; look for clear data-use policies and protective controls.

Can these tools be used outside of academic settings?

Yes, some AI scanners are adaptable to corporate or professional writing review, but features and policies may be tailored for education. Check licensing, integration capabilities, and reporting that suits non-academic workflows.

Some are adaptable for non-academic settings; verify licensing and features.

What should I ask vendors during a pilot?

Ask about data retention, how paraphrase detection works, integration with your LMS, reporting formats, support SLAs, and how the tool handles student rights and opt-outs. A structured pilot helps you assess both accuracy and workflow fit.

Ask about data handling, LMS integration, reporting, and support during a pilot.

Key Takeaways

- Define data-retention policies before selecting a tool

- Benchmark accuracy with representative submissions

- Pilot LMS integration to ensure smooth workflows

- Compare total cost of ownership across departments

- Prioritize transparent, actionable reporting for instructors