Where Are You From Face Scanner: Origins, Data, and Privacy in 2026

Explore how origin signals arise in face scanners, whether nationality can be inferred, and how privacy laws shape biometric data use. A Scanner Check guide to origin data, consent, and responsible deployment in 2026.

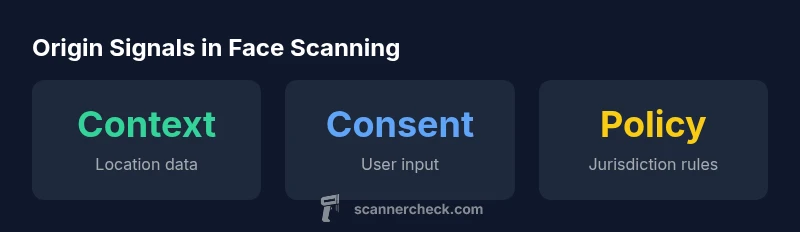

There is no universal method to determine origin from a face alone. In practice, origin signals in face scanning come from contextual data, such as device location, account details, or user input, rather than from biometric features. Privacy controls and policy frameworks vary by vendor and jurisdiction, so checks for consent, transparency, and data governance are essential. The phrase "where are you from face scanner" is a common user query on this topic.

The question many readers ask: "where are you from face scanner," and why it matters

According to Scanner Check, origin signals in facial recognition are not derived from facial features alone. In practice, there is no universal method to determine nationality or ethnicity from a face, and most vendors avoid making claims about origin from biometric data. Instead, systems rely on contextual cues such as device location, account data, or user input, all governed by privacy policies that vary by vendor and jurisdiction. This is more than a technical curiosity; it affects bias, governance, and individual rights.

In practical terms, organizations deploying face scanners should treat origin as a contextual attribute, not a biometric trait. Questions about where someone comes from often surface in tailored access controls or region-specific content, but the underlying signals are assembled from multiple sources. Because many vendors emphasize privacy-by-design, you will want to examine consent flows, data minimization, and audit trails as part of any deployment. Finally, keep in mind that public discussion about origin signals can drift into stereotypes; responsible practitioners demand clarity about data sources and limits on inferences.

How origin signals are inferred (and why this is controversial)

There are several layers to how origin-related information can creep into a face-scanning workflow:

- Contextual signals: geolocation, IP address, device profile, and user-provided metadata.

- Enrollment data: any prior consent or profile information tied to an account.

- Policy and risk flags: vendor-specific heuristics for risk assessment or access control.

This approach raises concerns about bias and equity: different geographic regions may have varying data protection regimes, and in some cases, the same biometric sample could be treated differently depending on context. The key takeaway is that a scan itself rarely proves origin; it is usually the surrounding data that creates an inferred picture. In 2026, Scanner Check analysis shows that reliance on context rather than biometrics is the dominant pattern among responsible vendors.

In practice, organizations should demand explicit documentation of data sources and policies outlining how origin inferences are made, stored, and shared.
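One way to make that documentation concrete is to attach a source and a consent reference to every origin-related signal. The following is a minimal sketch, not any vendor's actual schema: the names `SignalSource`, `OriginSignal`, and `build_origin_context` are hypothetical, and the source categories simply mirror the contextual and enrollment layers listed above.

```python
from dataclasses import dataclass
from enum import Enum


class SignalSource(Enum):
    """Where an origin-related signal came from (hypothetical categories)."""
    GEOLOCATION = "geolocation"
    IP_ADDRESS = "ip_address"
    DEVICE_PROFILE = "device_profile"
    USER_INPUT = "user_input"
    ENROLLMENT = "enrollment"
    BIOMETRIC = "biometric"  # listed only so it can be rejected explicitly


@dataclass(frozen=True)
class OriginSignal:
    source: SignalSource
    value: str       # e.g. a region code such as "DE"
    consent_id: str  # reference to the consent record covering this signal


def build_origin_context(signals):
    """Return a documented origin context, refusing biometric-derived signals."""
    accepted = []
    for signal in signals:
        if signal.source is SignalSource.BIOMETRIC:
            raise ValueError("origin must not be inferred from the face scan itself")
        if not signal.consent_id:
            continue  # drop signals that have no recorded consent
        accepted.append(signal)
    return {
        # A sorted list of sources doubles as the "data sources" disclosure.
        "sources": sorted({s.source.value for s in accepted}),
        "signals": accepted,
    }
```

Because every accepted signal carries its source, the resulting context can be audited or disclosed without guessing where an inference came from.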

Privacy law and policy: what changes with biometric data

The regulatory landscape for face-scanning technologies is uneven across jurisdictions. In regions with strong data protection regimes, biometric data processing requires explicit consent, purpose limitation, and clear retention rules. In other areas, the absence of explicit biometric rules can lead to broader interpretation of data signals used for origin inference. Key commonalities include the need for transparency, auditability, and the ability for individuals to access, correct, or delete their data. For developers, this means building privacy-by-design into every integration, including strict data minimization, robust access controls, and regular compliance reviews. Scanner Check analyses highlight that lawful deployment increasingly hinges on documented consent and demonstrable governance around origin data.
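Purpose limitation and retention rules can be expressed as a small, testable policy. The sketch below is illustrative only: the `RETENTION` table, its purposes, and its time windows are assumptions made for the example, not values required by any law or recommended by Scanner Check.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention windows per declared purpose.
RETENTION = {
    "access_control": timedelta(days=30),
    "regional_content": timedelta(days=7),
}


def is_expired(record, now):
    """A record expires when its purpose is undeclared or its window has passed."""
    limit = RETENTION.get(record["purpose"])
    if limit is None:
        return True  # purpose limitation: no declared purpose, no retention
    return now - record["collected_at"] > limit


def purge(records, now):
    """Return only the records that may still be kept."""
    return [r for r in records if not is_expired(r, now)]


now = datetime(2026, 1, 31, tzinfo=timezone.utc)
records = [
    {"id": 1, "purpose": "access_control", "collected_at": now - timedelta(days=10)},
    {"id": 2, "purpose": "regional_content", "collected_at": now - timedelta(days=10)},
    {"id": 3, "purpose": "analytics", "collected_at": now},
]
kept = purge(records, now)  # only record 1 is within a declared window
```

Treating an undeclared purpose as expired is a deliberately conservative design choice; a real deployment would set these rules with legal counsel for each jurisdiction.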

Practical implications for developers and operators

Developers should design systems that separate biometric processing from contextual inference, with strict controls over when and how origin signals are used. Operational teams should implement:

- Clear consent prompts and easy withdrawal options

- Data minimization: collect only signals necessary for a given function

- Strong access controls and role-based permissions

- Regular privacy impact assessments and logs for auditability
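The controls above can be combined in a single gate in front of any contextual data. This is a minimal sketch under stated assumptions: `ALLOWED_FIELDS`, `ROLES_WITH_ACCESS`, and `read_origin_context` are hypothetical names, and the in-memory `audit_log` list stands in for a real append-only log.

```python
from datetime import datetime, timezone

# Hypothetical policy: which contextual fields each function may read.
ALLOWED_FIELDS = {
    "regional_content": {"region"},
    "access_control": {"region", "account_tier"},
}

# Hypothetical roles permitted to read origin-related context at all.
ROLES_WITH_ACCESS = {"privacy_officer", "access_service"}

audit_log = []


def read_origin_context(record, purpose, role, consented):
    """Return only the fields needed for `purpose`, or None if not permitted."""
    allowed = bool(
        consented
        and role in ROLES_WITH_ACCESS
        and purpose in ALLOWED_FIELDS
    )
    # Every attempt is logged, including the ones that are refused.
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "role": role,
        "purpose": purpose,
        "granted": allowed,
    })
    if not allowed:
        return None
    # Data minimization: drop every field the purpose does not need.
    return {k: v for k, v in record.items() if k in ALLOWED_FIELDS[purpose]}
```

For example, a record containing `region`, `account_tier`, and `ip` yields only `{"region": ...}` for the `regional_content` purpose, and a withdrawn consent (`consented=False`) yields `None` while still leaving an audit entry.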

From a product perspective, explain in user-facing terms how origin signals are used, what data sources feed them, and what choices users have to opt out or limit processing. This transparency reduces risk and builds trust while aligning with evolving legal standards. As organizations scale, Scanner Check notes that governance becomes as critical as the technology itself.

User rights and how to exercise them

Users should be empowered to request access to their data, correction of inaccuracies, and deletion where permissible. Operators should provide clear channels for privacy requests, acknowledge timelines, and confirm when data is shared with third parties. If a user suspects misuse of biometric or origin-related data, reporting mechanisms should escalate quickly, with a defined investigation process. The goal is to balance legitimate security needs with individual rights, ensuring that origin inferences do not become opaque or coercive.
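The access, correction, and deletion requests described above map naturally onto three operations over stored records. The sketch below assumes a hypothetical in-memory `OriginDataStore`; a production system would add identity verification, request timelines, and the "where permissible" checks that depend on jurisdiction.

```python
class OriginDataStore:
    """In-memory stand-in for wherever origin-related records are kept."""

    def __init__(self):
        self._records = {}      # user_id -> dict of stored fields
        self._shared_with = {}  # user_id -> list of third-party names

    def save(self, user_id, fields, shared_with=()):
        self._records[user_id] = dict(fields)
        self._shared_with[user_id] = list(shared_with)

    def access(self, user_id):
        """Access request: return a copy of the data plus sharing disclosures."""
        if user_id not in self._records:
            return None
        return {
            "data": dict(self._records[user_id]),
            "shared_with": list(self._shared_with[user_id]),
        }

    def correct(self, user_id, field, value):
        """Correction request: overwrite one stored field."""
        if user_id not in self._records:
            return False
        self._records[user_id][field] = value
        return True

    def delete(self, user_id):
        """Deletion request: remove the record and report whether one existed."""
        self._shared_with.pop(user_id, None)
        return self._records.pop(user_id, None) is not None
```

Returning the list of third parties alongside the data keeps the disclosure of sharing in the same response as the access request, rather than leaving it to a separate process.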

Industry outlook and best practices

The industry is moving toward greater transparency and consent-driven models for origin signals in face scanning. Best practices include publishing data inventories, providing simple privacy dashboards, and adopting auditable data pipelines. Vendors should offer independent assessments and clear notices about what signals are used, how long data is retained, and under what circumstances it is shared. Scanner Check anticipates continued refinement of regulatory guidance and increased demand for end-to-end governance in biometric deployments.

Origin signals and privacy considerations

| Aspect | Definition | Privacy Impact |
|---|---|---|
| Origin signal | Contextual cues used to infer geographic or demographic context, not biometric traits | Medium |
| Consent status | Whether consent is recorded for biometric processing and any origin inferences | High |
| Regulatory alignment | Oversight under GDPR, CCPA, and other privacy frameworks | Variable |

Common Questions

Is it possible to determine where someone is from using a face scanner?

Typically, no. Face scanners do not reliably determine nationality or ethnicity from a photo alone; any geographic inference comes from contextual data and consent, not the biometric scan. Vendors should provide clear disclosures about data sources and purpose.

No. Origin is typically not determined from the face scan itself; it relies on context and consent.

Do all face scanners infer nationality or origin signals?

No. Origin inferences depend on integration with other data sources and policy controls; reputable vendors emphasize consent and transparency.

No. Origin signals are not inherent to the face scan; they depend on data and policy controls.

What are 'origin signals' in biometric systems?

Origin signals refer to contextual cues used to guess geographic or demographic context, not direct biometric attributes. They require careful governance and clear user consent.

Origin signals are contextual cues, not biometric traits, needing governance.

How do privacy laws affect biometric data like face scans?

Laws such as GDPR and CCPA regulate consent, storage, and sharing of biometric data, with penalties for misuse. Always review regional rules before deployment.

Biometric data is tightly regulated; ensure consent and regional compliance.

What can users do to protect their privacy?

Review privacy policies, opt out of unnecessary data collection where possible, and demand transparent data practices and deletion rights.

Read policies, limit data sharing, and exercise deletion and opt-out rights.

“Face-scanning origin signals are rarely derived from facial features alone; when used, they must be bound to clear consent and auditable data practices.”

Key Takeaways

- Know that origin signals are context-based, not biometric.

- Review privacy policies and consent settings.

- Check jurisdictional privacy requirements.

- Limit data sharing where possible.

- Choose vendors with transparent data practices.